Track 2: Action Recognition from Dark Videos

Register for this track

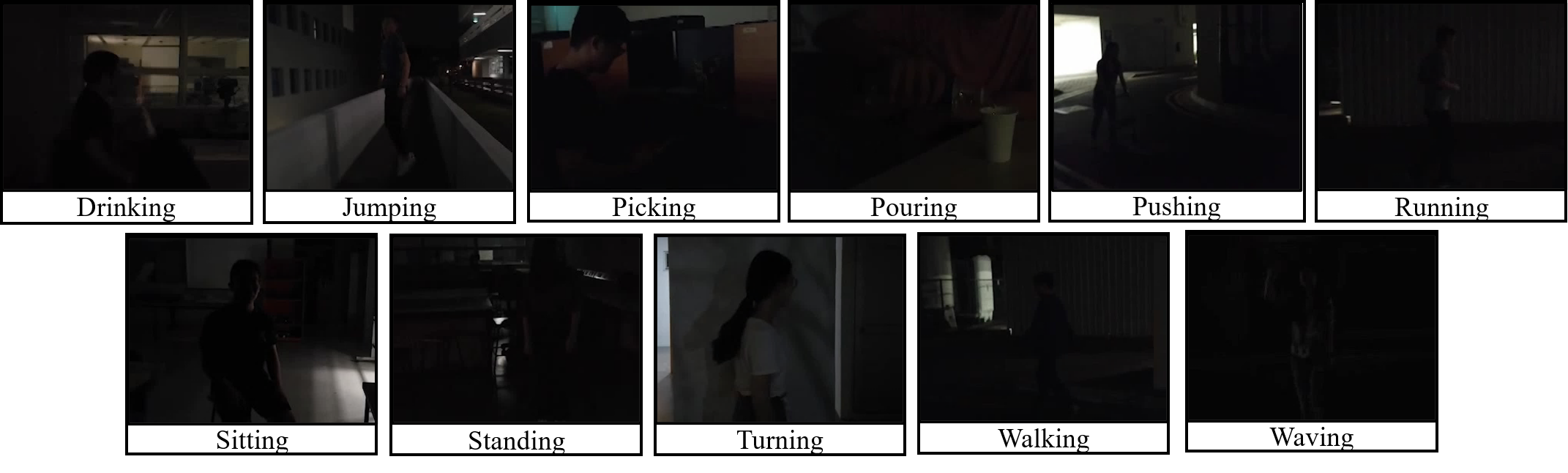

With the advances in computer vision technologies, especially video-based technologies, various automatic video-based tasks have received considerable attention. There have been increasing applications of these technologies in various fields, which include surveillance and smart homes. The advances can be credited in part to a rising number of video-based datasets, designed for a range of video-based tasks such as action recognition, localization and segmentation. Although much progress has been made, the progress is mostly limited to videos shot under “clear” environments, with normal illumination and contrast. Such limitation is partly due to the fact that current benchmark video-based datasets are collected from either crowdsourcing platforms or from public web video platforms directly. Either source contains mostly “clear” videos, where the actions are identifiable. However, there has been an increasing number of scenarios where videos shot under normal illumination may not be available. One notable scenario is night security surveillance, where security cameras are usually placed at alleys or fields with little lighting. The actions captured are hard to identify even by the naked eye. Although additional sensors, such as infrared imaging sensors, could be utilized for recognizing action in these environments, their cost prohibits the large scale deployment of these sensors. It is therefore greatly beneficial to explore possible video-based technologies that are robust to darkness, extracting effective action features from dark videos, which would benefit in various downstream applications.

Over the past few years, there has been a remarkable rise of research interest with regards to computer vision tasks in dark environments, which include dark face recognition and dark image enhancement. More recently, such research interests have been expanded to the video domain, especially towards darkness enhancement. However, it is noted that under image domain, visually enhanced dark images may not result in better classification accuracies when the same techniques are applied. Therefore it seems uncertain if the visually enhanced videos could necessarily result in better action recognition accuracies. Furthermore, with massive publicly available clear videos, it also seems promising to utilize them in some way, with possible pre-processing or post-processing steps, to capture actions with high robustness towards darkness.

Therefore, UG2+ Challenge 2 aims to promote video-based action recognition algorithms’ robustness with special focus on dark videos utilizing Semi-Supervised Algorithms which could utilize both clear videos and dark videos. Furthermore, given the high cost of video annotation, UG2+ Challenge 2 aims to elevate the importance of eco-friendly and efficient learning by decreasing annotation cost. UG2+ Challenge 2 would therefore provide a curated list of labeled clear videos from common and current public video datasets with unlabeled dark videos for training. The labeled videos are collected from the following video datasets: HMDB51[2], UCF101[3], Kinetics-600[4], and Moments in Time[5]. Evaluation would be conducted on a hold-out set of dark videos. The dark data for our challenge is built based on the ARID dataset [1], the first dataset dedicated to action recognition in the dark.

In UG2+ Challenge 2, the participant teams are allowed to use external training data that are not mentioned above, including self-synthesized or self-collected data; but they must state so in their submissions ("Method description" section in Codalab). The ranking criteria will based on i) Top-1 accuracy on the testing set and ii) Cross-Entropy Loss with respect to the groud truth label of the testing set.

Organizing Committee:

Yuecong Xu (Institute for Infocomm Research, A*STAR, Singapore / Nanyang Technological University, Singapore)

Zhenghua Chen (Institute for Infocomm Research, A*STAR, Singapore)

Jianfei Yang (Nanyang Technological University, Singapore)

Haozhi Cao (Nanyang Technological University, Singapore)

Jianxiong Yin (NVIDIA AI Tech Center, Singapore)

We would like to acknowledge the support from Institute for Infocomm Research, A*STAR, Singapore and Nanyang Technological University, Singapore over the course of this Challenge as well as the construction of the ARID dataset. We would also like to spcially thank Asst. Prof. Qianwen Xu from KTH Royal Institute of Technology, Sweden for her support!

References:

[1] Xu, Y., Yang, J., Cao, H., Mao, K., Yin, J. and See, S., 2020. ARID: A New Dataset for Recognizing Action in the Dark. arXiv preprint arXiv:2006.03876.

[2] Jhuang, H., Garrote, H., Poggio, E., Serre, T. and Hmdb, T., 2011, November. A large video database for human motion recognition. In Proc. of IEEE International Conference on Computer Vision (Vol. 4, No. 5, p. 6).

[3] Soomro, K., Zamir, A.R. and Shah, M., 2012. UCF101: A dataset of 101 human actions classes from videos in the wild. arXiv preprint arXiv:1212.0402.

[4] Kay, W., Carreira, J., Simonyan, K., Zhang, B., Hillier, C., Vijayanarasimhan, S., Viola, F., Green, T., Back, T., Natsev, P. and Suleyman, M., 2017. The kinetics human action video dataset. arXiv preprint arXiv:1705.06950.

[5] Monfort, M., Andonian, A., Zhou, B., Ramakrishnan, K., Bargal, S.A., Yan, T., Brown, L., Fan, Q., Gutfreund, D., Vondrick, C. and Oliva, A., 2019. Moments in time dataset: one million videos for event understanding. IEEE transactions on pattern analysis and machine intelligence, 42(2), pp.502-508.

Footer